Description

The purpose of the robots.txt file is to tell search engine web crawlers which parts of your website they may crawl for indexing. The robots.txt file needs to exist within your project and needs to live in the root folder. On a plain .NET website, maintaining the contents of robots.txt would normally be a developer's task. With Episerver, however, the file can be maintained without developer involvement. When working with a CMS platform, the aim is to make everything editable, including robots.txt. Please see below for further instructions.
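To illustrate how a crawler interprets the file, here is a minimal sketch using Python's standard `urllib.robotparser` module. The sample rules, the `/episerver/` and `/util/` paths, and the `www.example.com` host are assumptions chosen for illustration only; they are not taken from any particular site or from the plugin described in this article.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; the paths and host are illustrative only.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /episerver/
Disallow: /util/
Allow: /

Sitemap: https://www.example.com/sitemap.xml
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text in memory, without fetching anything over HTTP."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(SAMPLE_ROBOTS_TXT)

# A crawler that honours the rules would skip the disallowed path...
print(parser.can_fetch("*", "https://www.example.com/episerver/cms"))  # False
# ...and would be free to crawl ordinary content pages.
print(parser.can_fetch("*", "https://www.example.com/about-us"))  # True
```

The same check can be run against whatever rules you intend to publish, which may help catch a rule that blocks more of the site than intended.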

Resolution

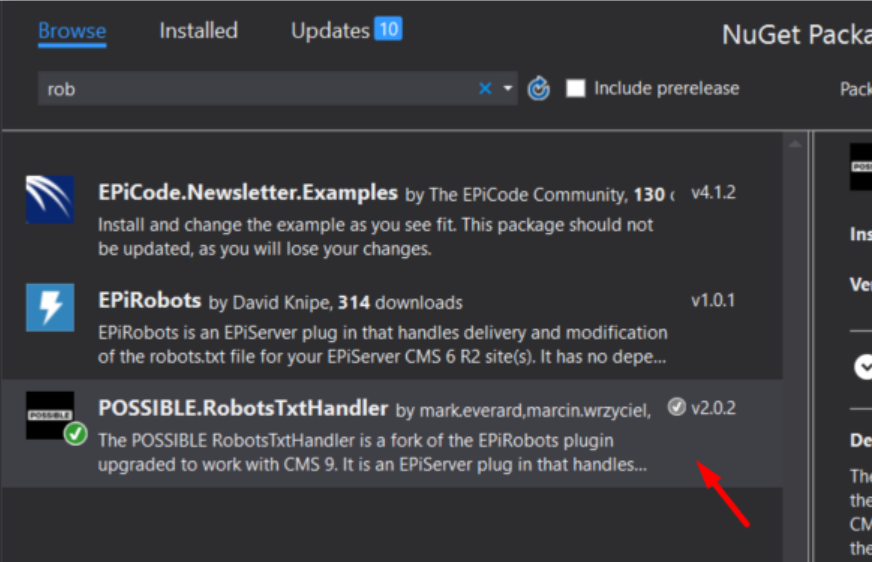

The plugin you can use is available as a NuGet package named 'POSSIBLE.RobotTxtHandler'. You can download the source code from here (link to GitHub):

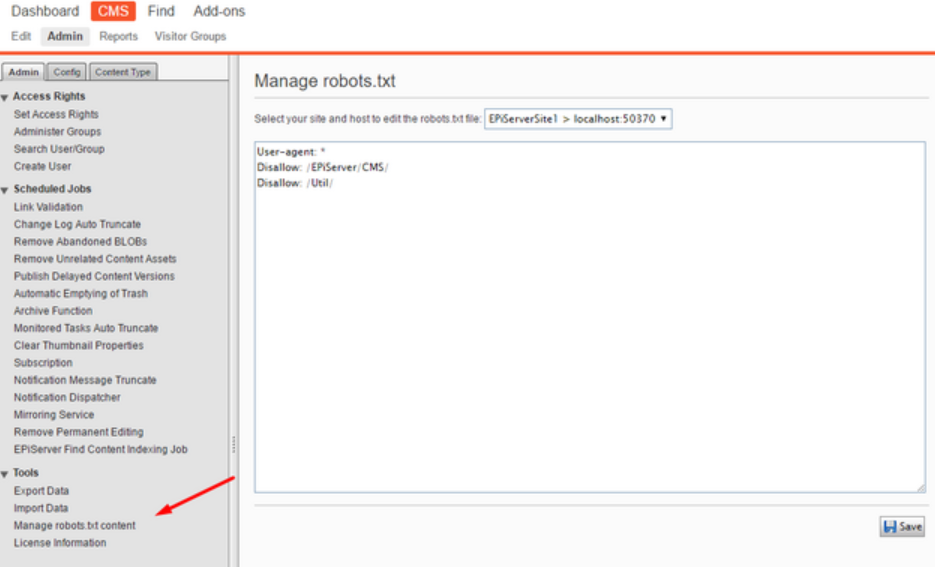

After installing the robots.txt plugin, load your Episerver website and visit the admin section. You will see a new entry in the left-hand navigation menu. From this screen you will be able to edit the robots.txt file.
