- Optimizely Web Experimentation

- Optimizely Personalization

- Optimizely Feature Experimentation

- Optimizely Full Stack (Legacy)

Use multi-armed bandits (MABs) in Optimizely Experimentation to maximize traffic to your winning variations. MABs differ from A/B tests because they do not generate statistical significance and do not use a control or baseline experience. Instead, the MAB results page focuses on improvement over equal allocation as its primary summary of your optimization's performance.

Why MABs do not show statistical significance

With a traditional A/B test, the goal is to collect data to discover if a variation performs better or worse than the control. This is expressed through the concept of statistical significance. Statistical significance tells you whether a change had the expected effect so you can improve your variations each time. Fixed traffic allocation strategies reduce the time needed to reach a statistically significant result.

Optimizely Experimentation's MAB algorithms aggressively push traffic to whichever variations are performing best because the MAB does not consider the reason for that superior performance important. Because MABs ignore statistical significance, the results page does not report it. This avoids confusion about the purpose and meaning of MAB optimizations.

Why MABs do not use a baseline

In a traditional A/B test, statistical significance is calculated relative to the performance of one baseline experience. MABs instead make traffic allocation decisions continuously throughout the experiment's lifetime. They explicitly evaluate the tradeoffs between all variations at once instead of continuously comparing performance to a baseline experience as a reference. MABs help validate your optimized variations and confirm whether to deploy a winning variation or stay with the control.

Improvement over original

An improvement over the original is an estimate of the gain in total conversions compared to delivering all traffic to the original variation.

To calculate it, Optimizely Experimentation examines the cumulative average conversions per visitor for each variation. It then multiplies the original's conversion rate by the total number of visitors in the test and compares this to the observed conversion counts.
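The calculation described above can be sketched in a few lines of Python. This is only an illustration of the arithmetic, not Optimizely's implementation; the function name `improvement_over_original`, the input layout, and the example counts are all made up for this sketch.

```python
def improvement_over_original(results, original="Original"):
    """Estimate extra conversions versus sending all traffic to the original.

    `results` maps a variation name to (conversions, visitors).
    The layout and names are hypothetical, chosen for this sketch.
    """
    total_visitors = sum(visitors for _, visitors in results.values())
    total_conversions = sum(conversions for conversions, _ in results.values())

    orig_conversions, orig_visitors = results[original]
    if orig_visitors == 0:
        raise ValueError("the original variation has no visitors yet")
    original_rate = orig_conversions / orig_visitors

    # Conversions expected had every visitor in the test seen the original.
    expected_if_all_original = original_rate * total_visitors

    # Positive values mean the observed total beat that estimate.
    return total_conversions - expected_if_all_original


# Made-up counts for illustration only.
example = {
    "Original": (100, 1000),
    "Variation #1": (260, 2000),
}
gain = improvement_over_original(example)
print(gain)
```

Here the original converts at 10%, so 3,000 visitors would be expected to yield 300 conversions on the original alone, while 360 were observed across the test.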

Algorithms powering Optimizely Experimentation's MAB

Optimizely uses a different algorithm based on the type of metric:

Binary metrics

Optimizely Experimentation uses a Bayesian MAB procedure called Thompson Sampling [Russo, Van Roy 2013]. For each variation, Optimizely uses the test's current observed number of unique conversions and number of visitors to characterize a beta distribution. Optimizely samples these distributions several times and allocates traffic according to their win ratio.

For example, suppose you have the following results on comparing unique conversions when Optimizely is updating the traffic allocation:

Figure 1. The observed results of the experiment under a Bernoulli metric.

Optimizely characterizes beta distributions, denoted as Beta(𝛼, 𝛽), based on these values, where 𝛼 is the number of unique conversions, and 𝛽 is the number of visitors for each variation. For example, the Original variation would be characterized by Beta(𝛼, 𝛽), with 𝛼 and 𝛽 taken from its results in Figure 1.

Figure 2. The resulting Beta distributions are characterized by the results in Figure 1.

Optimizely samples these distributions N times (say, 10,000), recording the distribution that yields the highest value in each round. This simulates what might happen if Optimizely had 10,000 visitors allocated to the Original, 10,000 to Variation #1, and 10,000 to Variation #2. The ratio of times each variation wins determines the percentage of new visitors allocated to it:

Figure 3. Result of Thompson Sampling where N=10000.

Optimizely Experimentation runs Thompson Sampling again on the next iteration with the updated observations.
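The sampling step can be sketched as follows. This is a minimal illustration that assumes the textbook parameterization, Beta(1 + conversions, 1 + non-conversions) with a uniform prior, which may differ from the exact parameters Optimizely uses. The function name `thompson_allocation` and the counts are hypothetical and are not the values from Figure 1.

```python
import random


def thompson_allocation(results, n_rounds=10_000, seed=0):
    """Return the share of sampling rounds that each variation wins.

    `results` maps a variation name to (unique_conversions, visitors).
    """
    rng = random.Random(seed)
    wins = {name: 0 for name in results}

    for _ in range(n_rounds):
        draws = {}
        for name, (conversions, visitors) in results.items():
            # Assumption: uniform prior, so Beta(1 + successes, 1 + failures).
            alpha = 1 + conversions
            beta = 1 + (visitors - conversions)
            draws[name] = rng.betavariate(alpha, beta)
        # The variation with the highest sampled rate wins this round.
        winner = max(draws, key=draws.get)
        wins[winner] += 1

    # Win ratios become the traffic percentages for new visitors.
    return {name: count / n_rounds for name, count in wins.items()}


# Made-up counts for illustration only.
observed = {
    "Original": (40, 1000),
    "Variation #1": (50, 1000),
    "Variation #2": (65, 1000),
}
allocation = thompson_allocation(observed)
print(allocation)
```

Because the result is a win ratio rather than a hard choice, variations that are only slightly behind still receive some traffic, which keeps the estimates for every variation improving between updates.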

Numeric metrics

Optimizely Experimentation uses the Epsilon Greedy procedure, where a small fraction of traffic is allocated uniformly across all variations, and the remaining traffic is allocated to the variation with the highest observed mean.
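A minimal sketch of an epsilon-greedy split is shown below. The exploration fraction (`epsilon=0.1`), the function name, and the example means are assumptions for illustration; the value Optimizely uses is not stated here.

```python
def epsilon_greedy_allocation(observed_means, epsilon=0.1):
    """Return traffic shares: epsilon spread uniformly, the rest to the best mean.

    `observed_means` maps a variation name to its observed mean metric value.
    """
    if not observed_means:
        raise ValueError("need at least one variation")
    k = len(observed_means)
    best = max(observed_means, key=observed_means.get)

    # Every variation gets an equal slice of the exploration budget.
    shares = {name: epsilon / k for name in observed_means}
    # The remaining traffic goes to the variation with the highest observed mean.
    shares[best] += 1.0 - epsilon
    return shares


# Made-up revenue-per-visitor means for illustration only.
means = {"Original": 4.10, "Variation #1": 4.85, "Variation #2": 3.90}
shares = epsilon_greedy_allocation(means, epsilon=0.1)
print(shares)
```

With three variations and an assumed epsilon of 0.1, each variation receives about 3.3% of traffic for exploration, and the current leader receives the remaining 90% on top of its exploration share.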

Set up an MAB optimization in Optimizely Experimentation

Create an MAB optimization in Optimizely Web Experimentation

- Go to Experiments and click Create New.... In Optimizely Personalization, go to Optimizations and click Create New.... You can also set the Distribution Mode as MAB for personalization campaigns. See the FAQ below.

- Select Multi-Armed Bandit.

- Give your MAB a name, description, and URL to target, then click Create Bandit.

- Create at least two variations in the Visual Editor.

- Click Metrics to choose your primary metric. Your MAB uses the primary metric to determine how traffic is distributed across variations. After you start your MAB, you cannot change the primary metric.

- Test your MAB.

- Click Start Multi-Armed Bandit to launch your optimization.

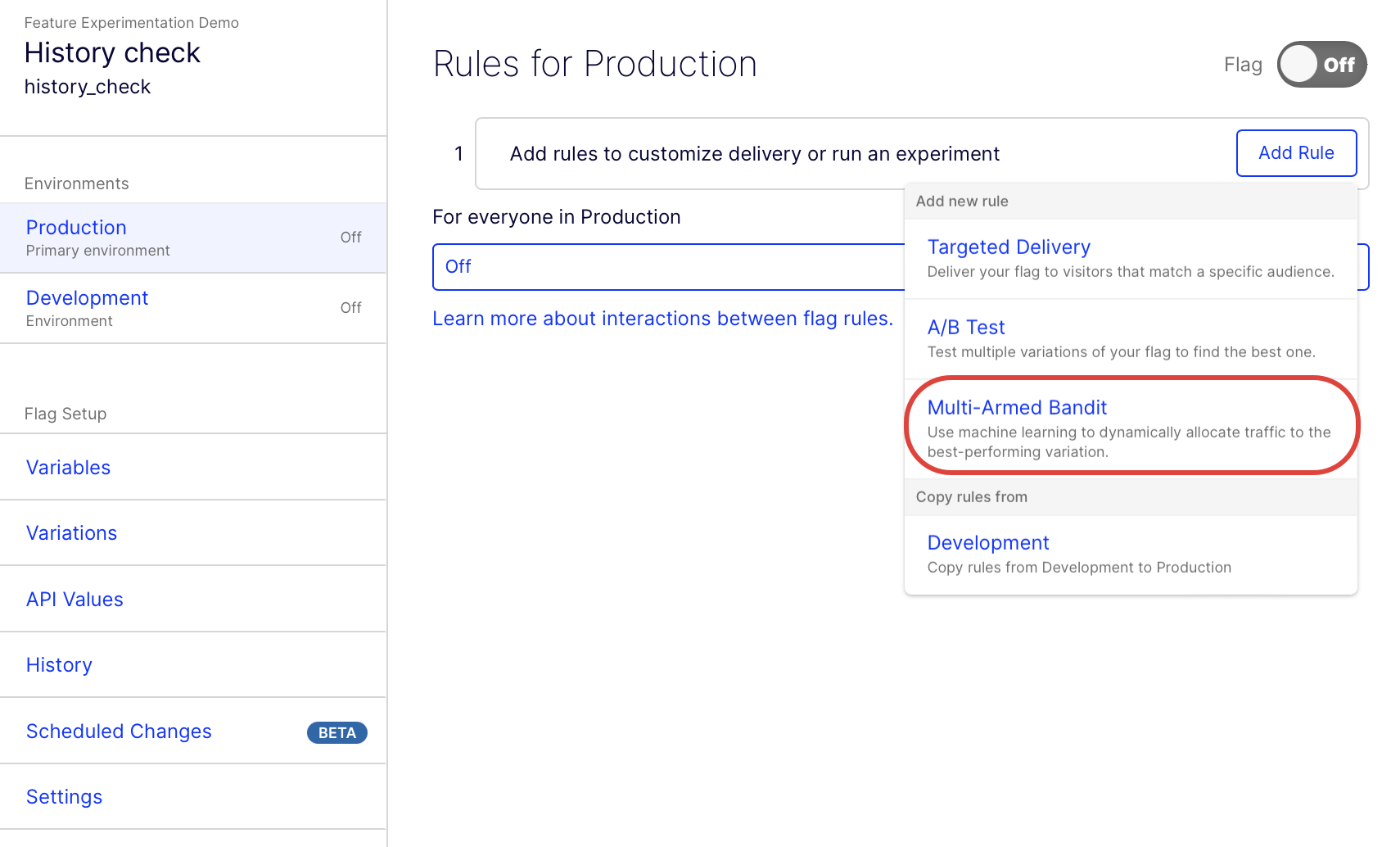

Create an MAB optimization in Optimizely Feature Experimentation

See Run a multi-armed bandit optimization to create an MAB flag rule in the Optimizely application and implement it in your code using the Feature Experimentation SDKs.

MAB optimization over A/B testing: a demonstration

In the following head-to-head comparison, simulated data is sent to both an A/B test with fixed traffic distribution and an MAB optimization. Traffic distribution over time and the cumulative count of conversions are observed for each mode. The true conversion rates driving the simulated data are:

- Original – 50%

- Variation 1 – 50%

- Variation 2 – 45%

- Variation 3 – 55%

The MAB algorithm indicates that Variation 3 is higher performing from the start. Even without any statistical significance information for this signal (remember, the multi-armed bandit does not show statistical significance), it still begins to push traffic to Variation 3 to exploit the perceived advantage and gain more conversions.

The traffic distribution remains fixed for the ordinary A/B experiment to quickly arrive at a statistically significant result. Because fixed traffic allocations are optimal for reaching statistical significance, MAB-driven experiments generally take longer to find winners and losers than A/B tests.

By the end of the simulation, the MAB optimized the experiment to achieve roughly 700 more conversions than if traffic were held constant.
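A comparison of this kind can be approximated with a small simulation. The sketch below uses the true conversion rates listed above, but everything else is an assumption: the batch size, the number of batches, the use of Thompson Sampling with a uniform prior, and the function name `simulate`. It is not the simulation behind the figures in this article, so its totals will differ from the roughly 700 conversions quoted here.

```python
import random

TRUE_RATES = {
    "Original": 0.50,
    "Variation 1": 0.50,
    "Variation 2": 0.45,
    "Variation 3": 0.55,
}


def simulate(use_bandit, n_batches=50, batch_size=400, n_rounds=1000, seed=1):
    """Simulate batched traffic; return (total conversions, visitors per variation)."""
    rng = random.Random(seed)
    names = list(TRUE_RATES)
    stats = {name: [0, 0] for name in names}   # [conversions, visitors]
    weights = [1 / len(names)] * len(names)    # start with an equal split

    for _ in range(n_batches):
        # Assign this batch of visitors according to the current weights.
        for name in rng.choices(names, weights=weights, k=batch_size):
            stats[name][1] += 1
            stats[name][0] += rng.random() < TRUE_RATES[name]
        if use_bandit:
            # Re-estimate win shares with Thompson Sampling after each batch.
            wins = dict.fromkeys(names, 0)
            for _ in range(n_rounds):
                draws = {
                    n: rng.betavariate(1 + c, 1 + v - c)
                    for n, (c, v) in stats.items()
                }
                wins[max(draws, key=draws.get)] += 1
            weights = [wins[n] / n_rounds for n in names]

    total = sum(c for c, _ in stats.values())
    return total, {n: v for n, (c, v) in stats.items()}


fixed_total, fixed_visitors = simulate(use_bandit=False)
bandit_total, bandit_visitors = simulate(use_bandit=True)
print("fixed allocation:", fixed_total, fixed_visitors)
print("bandit allocation:", bandit_total, bandit_visitors)
```

In runs of this sketch, the fixed split keeps sending about a quarter of traffic to each variation, while the bandit run should shift most visitors toward Variation 3 as evidence accumulates.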

FAQs

Does the multi-armed bandit algorithm work with multivariate tests and Web Personalization?

Yes. To use an MAB in a multivariate test (MVT), select Partial Factorial. Under Distribution Mode, select Multi-armed Bandit.

In Optimizely Personalization, you can apply an MAB on the experience level. This works best when you have two variations aside from the holdback.

How often does the MAB make a decision?

The MAB model is updated hourly.

Why is a baseline variation listed on the results page for my MAB campaign?

In MVT and Web Personalization, your Results page still designates one variation as a baseline. However, this designation does not mean anything because MABs do not measure success relative to a baseline variation. It is just a label that has no effect on your experiment or campaign.

You should not see a baseline variation on the results page when using an MAB with an experiment in Optimizely Web Experimentation or Optimizely Feature Experimentation.

What happens if I change my primary metric?

If you change the primary metric mid-experiment in a Web Experimentation MVT or Web Personalization, the MAB begins optimizing for the new primary metric instead of the one you originally selected. For this reason, do not change the primary metric once you begin the experiment or campaign.

You cannot change your primary metric in Optimizely Web Experimentation or Optimizely Feature Experimentation once your experiment has begun. See Why you should not change a running experiment for information.

What happens when I stop or pause a variation?

If you pause or stop a variation, the MAB ignores that variation's data when it adjusts traffic distribution among the remaining live variations. However, there are side effects you should be aware of. When the set of variations changes mid-experiment, the original bandit model is destroyed, and the MAB has to start from scratch to re-optimize its strategy for the remaining variations and adapt the traffic accordingly. Doing so adds weeks to the time the experiment needs to complete. Because of this, do not change variations mid-experiment. See Why you should not change a running experiment for information.

How do MABs handle conversion rates that change over time and Simpson's Paradox?

Optimizely Experimentation uses an exponential decay function that gives more weight to recent visitor behavior and less weight to earlier observations, so the MAB adapts more quickly when conversion rates change over time.

Also, Optimizely Experimentation reserves a portion of traffic for pure exploration so that time variation is easier to detect.
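The effect of exponential decay on the observed counts can be illustrated with a short sketch. The decay factor, the per-interval bucketing, and the function name `decayed_counts` are assumptions made for this example; the parameters Optimizely uses are not stated here.

```python
def decayed_counts(interval_results, decay=0.9):
    """Return exponentially decayed (conversions, visitors) totals.

    `interval_results` is ordered oldest to newest; each item holds the
    (conversions, visitors) observed in one update interval.
    """
    conversions = 0.0
    visitors = 0.0
    for conv, vis in interval_results:
        # Shrink everything seen so far, then add the newest interval at full weight.
        conversions = conversions * decay + conv
        visitors = visitors * decay + vis
    return conversions, visitors


# Made-up data: the conversion rate rises from 10% to 20% halfway through.
history = [(10, 100)] * 5 + [(20, 100)] * 5

conv, vis = decayed_counts(history, decay=0.7)
decayed_rate = conv / vis                       # pulled toward the recent 20%

plain_conv, plain_vis = decayed_counts(history, decay=1.0)
cumulative_rate = plain_conv / plain_vis        # decay=1.0 means no decay

print(decayed_rate, cumulative_rate)
```

With no decay, the rate is the plain cumulative average of 15%. With decay, the estimate sits closer to the recent 20%, which is why a decayed model reacts sooner when a variation's performance shifts.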

Will the traffic allocation always be updated?

No. The bandit algorithm used by MABs eventually converges. This means that the algorithm eventually determines the optimal traffic split among the variations in the experiment, and after that it no longer updates the traffic split between variations. All bandit algorithms work toward that goal.
